
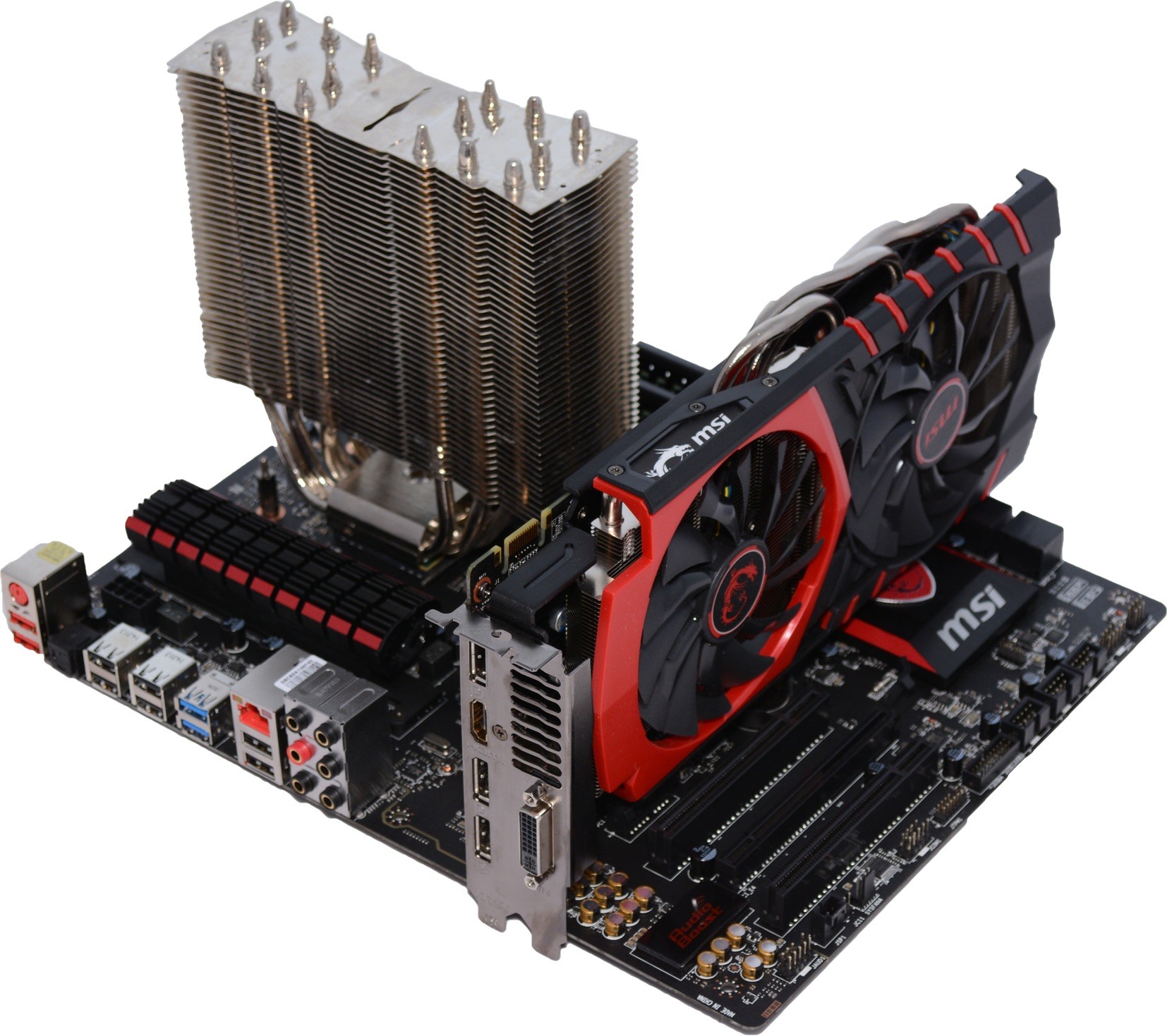

CPU architecture and core count matter a great deal in commercial machine learning applications. A well-chosen CPU can significantly speed up machine learning workloads, reduce energy consumption, and improve scalability. This article looks at the best CPU options for commercial machine learning and highlights the key features and considerations for each.

Accelerating Commercial Machine Learning with the Right CPU

In commercial machine learning, the right CPU can make the difference between a smooth, efficient workflow and a painfully slow, error-prone one. With a growing number of businesses relying on machine learning for decision-making and automation, demand for high-performance CPUs has never been higher.

Importance of CPU Architecture and Core Count

When it comes to commercial machine learning, CPU architecture and core count play a crucial role in determining overall system performance. CPU architecture refers to the design and organization of the processor, including the size and type of cache memory, the number of execution units, and the instruction set architecture (ISA). A well-designed architecture can significantly improve the performance of machine learning workloads, which are often characterized by large amounts of data and complex computations.

In commercial machine learning applications, a higher core count provides better multitasking and higher overall throughput. With more cores, the system can handle multiple tasks simultaneously, making it easier to train and deploy machine learning models. However, core count is not the only factor that determines performance; the quality of each core and the CPU architecture itself also play a significant role.
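
One way to see why core count alone does not determine performance is Amdahl's law, which bounds the speedup of a job when only part of it can run in parallel. The sketch below is illustrative only: the function name `amdahl_speedup` and the 90% parallel fraction are assumptions chosen for the example, not measurements of any real workload.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Upper bound on speedup when only part of a job can run in parallel."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if cores < 1:
        raise ValueError("cores must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    # The serial part takes the same time regardless of core count;
    # only the parallel part is divided among the cores.
    return 1.0 / (serial_fraction + parallel_fraction / cores)


if __name__ == "__main__":
    # Assumed workload: 90% of the runtime parallelizes cleanly.
    for cores in (8, 16, 64):
        print(f"{cores:>3} cores -> at most {amdahl_speedup(0.90, cores):.2f}x faster")
```

Under this model, adding cores gives diminishing returns once the serial portion dominates, which is why per-core speed and memory behavior still matter on high-core-count parts.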

Impact of CPU Cache Hierarchy on Machine Learning Performance

The CPU cache hierarchy refers to the organization of cache memory within the CPU. Cache is a small, fast memory that stores frequently accessed data, which can significantly reduce the time spent waiting on main memory. In commercial machine learning applications, a well-designed cache hierarchy improves performance by reducing the number of main-memory accesses.

The cache hierarchy typically consists of three levels: L1, L2, and L3. The L1 cache is the smallest and fastest, while the L3 cache is the largest and slowest. When data is accessed, the CPU first checks the L1 cache, then L2, and finally L3. If the data is not found in any of the caches, the CPU must go to main memory, which is much slower.

A good cache hierarchy therefore improves machine learning performance by keeping the working set close to the cores. A larger cache can also help by reducing the number of cache misses.
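
The lookup order described above (L1, then L2, then L3, then main memory) can be turned into a rough estimate of average access time. The following is a minimal sketch: the function name `average_access_time`, the latencies (in cycles), and the hit rates are illustrative assumptions, not figures for any particular CPU.

```python
def average_access_time(levels, memory_latency):
    """Expected cost of one memory access.

    levels: list of (hit_latency, hit_rate) tuples ordered L1, L2, L3.
    memory_latency: cost of going all the way to main memory.
    """
    expected = 0.0
    reach_probability = 1.0  # probability that a lookup gets as far as this level
    for hit_latency, hit_rate in levels:
        # Every lookup that reaches a level pays that level's latency.
        expected += reach_probability * hit_latency
        # Only the misses continue to the next level.
        reach_probability *= 1.0 - hit_rate
    return expected + reach_probability * memory_latency


if __name__ == "__main__":
    # Hypothetical latencies in cycles and hit rates, for illustration only.
    caches = [(4, 0.90), (12, 0.80), (40, 0.50)]
    print(average_access_time(caches, memory_latency=200))
```

Raising any hit rate in this model shrinks the share of accesses that pay the main-memory latency, which is the effect a larger or better-organized cache aims for.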

Accelerating Matrix Operations with CPU Features

Matrix operations are a fundamental part of machine learning, and many CPUs offer specialized instructions and hardware features to accelerate them. For example, the AVX (Advanced Vector Extensions) instruction set lets a CPU apply a single instruction to several floating-point values at once, which speeds up the inner loops of matrix multiplication and other linear algebra operations.

AVX-512 extends AVX by doubling the vector width from 256 to 512 bits, and its VNNI extension adds instructions for low-precision dot products. Optimized math libraries use these instructions to speed up machine learning workloads that rely heavily on matrix operations.
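
Before relying on these instruction sets, it can help to check what a given machine reports. The sketch below assumes an x86 Linux system, where `/proc/cpuinfo` contains a `flags` line listing entries such as `avx`, `avx2`, and `avx512f`; the helper names `parse_cpu_flags` and `simd_support` are made up for this example, and other platforms expose this information differently.

```python
from pathlib import Path


def parse_cpu_flags(cpuinfo_text: str) -> set[str]:
    """Return the feature flags from the first 'flags' line of cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            return set(value.split())
    return set()


def simd_support(flags: set[str]) -> dict[str, bool]:
    """Report which AVX generations appear in a set of CPU flags."""
    return {name: name in flags for name in ("avx", "avx2", "avx512f")}


if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")  # assumption: x86 Linux; absent elsewhere
    text = cpuinfo.read_text() if cpuinfo.exists() else ""
    print(simd_support(parse_cpu_flags(text)))
```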

CPU Manufacturers for Commercial Machine Learning

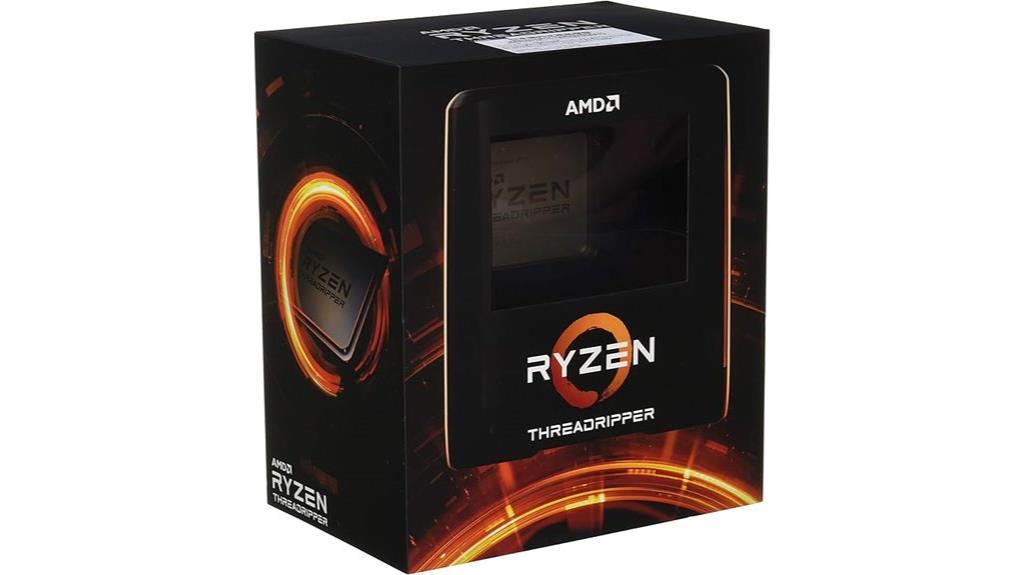

Both Intel and AMD offer high-performance CPUs that are well suited to commercial machine learning. Intel's Xeon family offers a wide range of CPUs with many cores and high clock speeds, making them a good fit for machine learning workloads. AMD's EPYC family also offers high-core-count CPUs, providing a competitive alternative to Intel's offerings.

When choosing a CPU for commercial machine learning, it's essential to consider the specific needs of your application. Factors to consider include the type of machine learning workload, the size of the dataset, and the level of parallelism required. By choosing the right CPU for your needs, you can ensure optimal performance and efficiency in your commercial machine learning workflows.

Comparison of Intel and AMD CPUs for Commercial Machine Learning

Here are a few key differences between Intel and AMD CPUs for commercial machine learning:

| CPU | Core Count | Base Clock Speed (GHz) | L3 Cache |
|---|---|---|---|
| Intel Xeon E5-2699 v4 | 22 | 2.2 | 55 MB |
| AMD EPYC 7742 | 64 | 2.25 | 256 MB |

As you can see, the two CPUs have similar base clock speeds, but the EPYC 7742 has a much higher core count and a larger cache, making it more suitable for machine learning workloads that require massive parallelism.

In short, choosing the right CPU for commercial machine learning is essential to ensuring optimal performance and efficiency. By considering factors such as CPU architecture, core count, and specialized instructions, you can choose the best CPU for your needs and unlock the full potential of your machine learning workflows.

Improving Performance with CPU-Optimized Machine Learning Frameworks and Tools

When it comes to machine learning at commercial scale, CPU performance plays a significant role in how efficiently data is processed and models are trained. To unlock the CPU's full potential, the choice of framework and toolchain is critical. This section explores some of the most notable frameworks and toolchains optimized for CPU performance, highlighting their capabilities and benefits.

Popular CPU-Optimized Machine Learning Frameworks

TensorFlow and PyTorch are the two most prominent frameworks in the machine learning space, and both support CPU as well as GPU execution. Some of the most widely used frameworks that are optimized for CPU performance include:

• TensorFlow, an open-source framework developed by Google, offers a wide range of APIs and tools for building and deploying machine learning models on CPU hardware.

• PyTorch, another open-source framework, originally developed by Facebook (now Meta), is known for its ease of use and provides a range of tools for efficient model training and deployment on CPU hardware.

• MXNet, a highly scalable open-source framework developed under the Apache Software Foundation, supports both CPU and GPU acceleration and offers a modular design that makes it easy to integrate with other frameworks.

• Caffe, a deep learning framework developed by the Berkeley Vision and Learning Center, is optimized for CPU performance and provides a range of tools for training and deploying neural networks.

Toolchains for CPU-Optimized Machine Learning

To further improve CPU performance, various toolchains support multi-threading and parallel processing techniques, which enable efficient execution of CPU-intensive tasks.

Toolchains like OpenBLAS, MKL, and oneTBB support CPU optimization by providing optimized implementations of linear algebra operations and other core math functions, as well as parallel processing capabilities for task-level parallelism.

• OpenBLAS, an open-source optimized BLAS (Basic Linear Algebra Subprograms) library, provides highly efficient implementations of linear algebra operations for CPU hardware.

• MKL (Math Kernel Library), a high-performance math library developed by Intel, offers optimized implementations of linear algebra operations and other core math functions for CPUs.

• oneTBB (oneAPI Threading Building Blocks), an open-source library developed by Intel, provides a range of parallel processing utilities for task-level parallelism on CPU hardware, enabling efficient execution of CPU-intensive tasks.
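
In practice, the number of threads these libraries use is often controlled through environment variables. The sketch below sets `OMP_NUM_THREADS`, `MKL_NUM_THREADS`, and `OPENBLAS_NUM_THREADS`, which are read by OpenMP runtimes, MKL, and OpenBLAS respectively. The helper names are hypothetical, and the variables generally need to be set before the numerical library is loaded; whether matching the thread count to the core count is the best choice depends on the workload.

```python
import os


def blas_thread_settings(num_threads: int) -> dict[str, str]:
    """Build the environment variables that cap math-library thread pools."""
    if num_threads < 1:
        raise ValueError("num_threads must be at least 1")
    value = str(num_threads)
    return {
        "OMP_NUM_THREADS": value,       # OpenMP runtimes
        "MKL_NUM_THREADS": value,       # Intel MKL
        "OPENBLAS_NUM_THREADS": value,  # OpenBLAS
    }


def apply_thread_settings(num_threads: int, environ=os.environ) -> dict[str, str]:
    """Write the settings into an environment mapping and return them."""
    settings = blas_thread_settings(num_threads)
    environ.update(settings)
    return settings


if __name__ == "__main__":
    # Assumption: one thread per logical core is a reasonable starting point.
    print(apply_thread_settings(os.cpu_count() or 1))
    # Import NumPy, PyTorch, etc. only after this point so they see the settings.
```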

Role of Data Locality and Memory Hierarchy

Data locality and the memory hierarchy play a crucial role in CPU-based machine learning performance, as they directly affect how quickly data can be accessed and processed.

Data locality refers to how close data is to the processing unit when it is needed, while the memory hierarchy refers to the organization of memory into levels with different access times.

• Caching and buffering mechanisms in modern CPUs exploit data locality, enabling faster access to frequently needed data.

• Using data structures with high spatial locality can also reduce memory access latency and improve overall CPU performance.
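
Spatial locality can be made concrete by looking at the memory offsets a loop touches. The sketch below assumes a matrix stored in row-major order (each row laid out contiguously) and compares the distance between consecutive accesses for row-wise and column-wise traversal. The function names are illustrative, and the example models offsets only; it does not measure real cache behavior.

```python
def row_major_offset(row: int, col: int, num_cols: int) -> int:
    """Position of element (row, col) in a flat, row-major buffer."""
    return row * num_cols + col


def traversal_offsets(num_rows: int, num_cols: int, order: str) -> list[int]:
    """Offsets visited when walking the matrix row by row or column by column."""
    if order == "row":
        return [row_major_offset(r, c, num_cols)
                for r in range(num_rows) for c in range(num_cols)]
    if order == "column":
        return [row_major_offset(r, c, num_cols)
                for c in range(num_cols) for r in range(num_rows)]
    raise ValueError("order must be 'row' or 'column'")


def access_strides(offsets: list[int]) -> list[int]:
    """Distance between each pair of consecutive accesses."""
    return [b - a for a, b in zip(offsets, offsets[1:])]


if __name__ == "__main__":
    for order in ("row", "column"):
        print(order, access_strides(traversal_offsets(2, 3, order)))
```

Row-wise traversal moves one element at a time, so neighboring accesses tend to fall in the same cache line, while column-wise traversal jumps by a full row width on most steps.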

Comparison of CPU-Optimized Libraries for Machine Learning

CPU performance can be a critical factor in how efficiently models are processed and trained. OpenBLAS and MKL are two popular libraries used for CPU optimization in machine learning.

This comparison highlights some of the key differences between OpenBLAS and MKL, including performance, features, and compatibility requirements.

| | OpenBLAS | MKL |
|---|---|---|
| Performance | High-performance optimization for linear algebra operations | Optimized implementations of linear algebra operations and other core math functions |
| Features | Highly customizable and extensible | High-performance optimization for linear algebra operations and other core math functions |
| Compatibility | Cross-platform compatibility with multiple CPU architectures | Optimized for Intel CPU architectures |

Note that the choice of library ultimately depends on specific project requirements, such as CPU architecture, performance demands, and compatibility needs.

Commercial Machine Learning Workflows and Scalability

In the world of commercial machine learning, scalability is key. As organizations strive to harness the power of AI to drive business growth, they often face significant challenges in scaling their machine learning workloads. This section covers designing and optimizing CPU-based machine learning workflows for high-performance computing, and explores the role of distributed computing and parallelization in large-scale machine learning tasks.

Challenges of Scaling Machine Learning Workloads

Scaling machine learning workloads in commercial environments is no easy feat. Here are some of the key challenges:

- Data volume and velocity: the sheer volume and speed of the data generated can overwhelm traditional computing systems.

- Model complexity and size: machine learning models are becoming increasingly sophisticated and larger.

- Computational resource constraints: the need for high-performance computing resources can lead to bottlenecks and performance issues.

- Economic and resource limitations: costs and resource constraints can limit the scope and scale of machine learning projects.

These challenges highlight the need for efficient and scalable machine learning workflows that can handle the complexities of commercial environments.

Designing and Optimizing CPU-Based Machine Learning Workflows

To overcome the challenges of scaling machine learning workloads, it's essential to design and optimize CPU-based machine learning workflows for high-performance computing. Here are some strategies to consider:

- Use multi-threading and multi-processing techniques to take advantage of multiple CPU cores.

- Use distributed computing frameworks to scale workloads across multiple machines.

- Use CPU-optimized machine learning libraries and frameworks, such as TensorFlow or PyTorch.

- Apply just-in-time (JIT) compilation and other optimization techniques to minimize overhead and improve performance.

By applying these strategies, organizations can create efficient and scalable machine learning workflows that can handle the demands of commercial environments.
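
The first strategy, spreading work across CPU cores, can be sketched with Python's standard `concurrent.futures` module. The example below splits a list into chunks, processes each chunk in a separate worker, and combines the partial results. The function names and the sum-of-squares workload are placeholders chosen for illustration; a real pipeline would substitute its own per-chunk computation.

```python
import concurrent.futures
import os


def split_into_chunks(values, num_chunks):
    """Split a list into at most num_chunks contiguous, near-equal pieces."""
    num_chunks = max(1, min(num_chunks, len(values)))
    size, remainder = divmod(len(values), num_chunks)
    chunks, start = [], 0
    for i in range(num_chunks):
        end = start + size + (1 if i < remainder else 0)
        chunks.append(values[start:end])
        start = end
    return chunks


def sum_of_squares(chunk):
    """Placeholder CPU-bound work performed independently on each chunk."""
    return sum(x * x for x in chunk)


def parallel_sum_of_squares(values, workers=None,
                            executor_cls=concurrent.futures.ProcessPoolExecutor):
    """Process chunks in parallel workers and combine the partial sums."""
    workers = workers or os.cpu_count() or 1
    chunks = split_into_chunks(values, workers)
    with executor_cls(max_workers=workers) as pool:
        return sum(pool.map(sum_of_squares, chunks))


if __name__ == "__main__":
    print(parallel_sum_of_squares(list(range(1_000_000))))
```

Processes are the default here because CPU-bound pure-Python code does not usually scale across threads; the `executor_cls` parameter is left open so a thread pool can be swapped in for I/O-bound steps.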

The Role of Distributed Computing and Parallelization

Distributed computing and parallelization are critical components of scalable machine learning workflows. By breaking down complex tasks into smaller sub-tasks and assigning them to multiple machines or cores, organizations can significantly improve performance and efficiency.

Parallelization is a key concept in distributed computing: multiple tasks are executed simultaneously, resulting in improved performance and scalability.

Here's an example of how distributed computing can be applied in a real-world commercial use case:

Example: Predictive Maintenance in Manufacturing

Suppose a manufacturing company wants to implement a predictive maintenance system using machine learning. The goal is to predict equipment failure and schedule maintenance accordingly. The company collects data from sensors on the equipment and uses a machine learning model to predict failure.

To scale this workflow, the company uses distributed computing to break the task into smaller sub-tasks:

- Data ingestion and preprocessing: Multiple machines are assigned to handle data ingestion and preprocessing, reducing the load on individual machines.

- Model training: The machine learning model is trained using distributed computing, with multiple machines working together to train the model in parallel.

- Prediction and deployment: The trained model is deployed in a distributed environment, where predictions are made concurrently across multiple machines.

By applying distributed computing and parallelization, the company can significantly improve the performance and scalability of its machine learning workflow, enabling it to make predictions in near real time and reduce downtime.
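
The model-training step in this example is often implemented as data-parallel training: each machine computes a gradient on its own shard of the data, and the results are averaged before the model is updated. The sketch below simulates that idea in a single process with a one-parameter linear model. The sensor readings, function names, and hyperparameters are all invented for illustration, and a real system would run `shard_gradient` on separate machines and exchange results over the network.

```python
def shard_gradient(weight, shard):
    """Gradient of the squared error sum((w*x - y)^2) over one shard, plus shard size."""
    gradient = sum(2.0 * (weight * x - y) * x for x, y in shard)
    return gradient, len(shard)


def data_parallel_step(weight, shards, learning_rate):
    """One update: gather per-shard gradients, average them, adjust the weight."""
    # In a real deployment each call below would run on a different machine.
    results = [shard_gradient(weight, shard) for shard in shards]
    total_gradient = sum(g for g, _ in results)
    total_examples = sum(n for _, n in results)
    return weight - learning_rate * total_gradient / total_examples


def train(shards, steps=200, learning_rate=0.01):
    """Repeat the averaged-gradient update starting from a zero weight."""
    weight = 0.0
    for _ in range(steps):
        weight = data_parallel_step(weight, shards, learning_rate)
    return weight


if __name__ == "__main__":
    # Hypothetical (sensor reading, wear score) pairs split across three machines.
    shards = [[(1, 3), (2, 6)], [(3, 9), (4, 12)], [(5, 15), (6, 18)]]
    print(train(shards))
```

Because the gradients are weighted by shard size, the update matches what a single machine would compute on the combined data, which is what lets the work be divided without changing the result.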

Integration and Interoperability

Integration and interoperability are crucial aspects of commercial machine learning, ensuring seamless communication and collaboration between different components, frameworks, and tools. In a CPU-based machine learning environment, integration and interoperability enable the efficient deployment and scaling of models, ultimately leading to improved performance and productivity.

CPU-Optimized Integration Examples

Numerous commercial machine learning environments offer CPU-optimized integration, improving the performance of CPU-based machine learning workflows. Some notable examples include:

- Intel OpenVINO: A comprehensive toolkit for AI, computer vision, and machine learning that provides optimized integration for CPU-based workloads. OpenVINO provides a range of pre-trained models and supports popular frameworks like TensorFlow, PyTorch, and Caffe.

- Google TensorFlow Lite: A lightweight version of TensorFlow designed for mobile and embedded devices, including CPUs. TensorFlow Lite offers optimized kernels for CPU-based workloads and supports both integer and floating-point data types.

- MXNet: An open-source deep learning framework that supports both CPU and GPU acceleration. MXNet provides a unified API for CPU and GPU workloads, making it an attractive option for CPU-based machine learning workflows.

These frameworks and tools are specifically designed to optimize CPU performance, enabling faster execution of machine learning workloads and reducing the need for expensive GPUs.

Data Format and Model Format Compatibility

The choice of data format and model format has significant implications for CPU-based machine learning workflows. Data formats like NumPy (ndarray) and Pandas (DataFrame) are popular choices for CPU-based workloads due to their efficiency and ease of use. In addition, model formats like TensorFlow's SavedModel and PyTorch's pickle-based checkpoints are widely used in CPU-based machine learning environments.

NumPy and Pandas offer efficient data structures and operations, making them ideal for CPU-based machine learning workloads.

CPU-based machine learning frameworks like TensorFlow Lite and OpenVINO also support a variety of data and model formats, ensuring seamless integration with existing workflows.
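
As a small illustration of a pickle-based model format, the sketch below saves and reloads a toy model using Python's standard `pickle` module. The model here is just a dictionary of made-up weights, and `save_model` and `load_model` are hypothetical helper names rather than any framework's API. Pickle files can execute code when loaded, so they should only be loaded from trusted sources.

```python
import pickle
import tempfile
from pathlib import Path


def save_model(model, path):
    """Serialize a Python object describing a model to disk."""
    with open(path, "wb") as handle:
        pickle.dump(model, handle, protocol=pickle.HIGHEST_PROTOCOL)


def load_model(path):
    """Load a model previously written by save_model (trusted files only)."""
    with open(path, "rb") as handle:
        return pickle.load(handle)


if __name__ == "__main__":
    # Toy stand-in for a trained model; the values are illustrative.
    model = {"weights": [0.5, -1.25], "bias": 0.1}
    path = Path(tempfile.mkdtemp()) / "model.pkl"
    save_model(model, path)
    print(load_model(path) == model)
```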

Protocols and Interfaces for CPU-Based Machine Learning

Protocols and interfaces play a critical role in CPU-based machine learning, enabling efficient communication between different components and frameworks. Two widely used GPU libraries are worth knowing alongside their CPU-side counterparts:

- cuBLAS (CUDA Basic Linear Algebra Subroutines): NVIDIA's implementation of the BLAS interface for its GPUs. cuBLAS itself runs only on NVIDIA GPUs; for CPU-based workloads, the same BLAS operations are provided by libraries such as OpenBLAS and MKL.

- NCCL (NVIDIA Collective Communications Library): A high-performance communication library for distributed training across NVIDIA GPUs. For CPU-only distributed training, collective communication is typically handled by MPI or Gloo instead.

These protocols and interfaces facilitate efficient communication and collaboration between different components and frameworks, ultimately leading to improved performance and productivity in machine learning workflows.

Design Considerations for a Scalable and Efficient CPU-Based Machine Learning Ecosystem

When designing a CPU-based machine learning ecosystem, several key considerations come into play:

- Scalability: The ability to scale up or down depending on the workload, ensuring efficient use of resources and minimal overhead.

- Efficiency: Optimizing CPU performance through efficient data formats, model formats, and protocol implementations.

- Avoiding Bottlenecks: Identifying and mitigating potential bottlenecks in the workflow, such as data input/output or memory access.

- Flexibility: Supporting a wide range of frameworks, tools, and protocols to accommodate diverse workload requirements.

By carefully considering these design factors, developers can create efficient and scalable CPU-based machine learning ecosystems that meet the demands of modern machine learning workloads.
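
Identifying bottlenecks usually starts with measuring where time goes. The sketch below times named pipeline stages with a context manager and reports the slowest one. The stage names and the placeholder workloads are assumptions for illustration, and a real investigation would likely follow up with a proper profiler.

```python
import time
from contextlib import contextmanager


@contextmanager
def timed_stage(name, timings):
    """Accumulate the wall-clock time spent inside the with-block under `name`."""
    start = time.perf_counter()
    try:
        yield
    finally:
        timings[name] = timings.get(name, 0.0) + time.perf_counter() - start


def slowest_stage(timings):
    """Name of the stage with the largest accumulated time."""
    return max(timings, key=timings.get)


if __name__ == "__main__":
    timings = {}
    with timed_stage("load", timings):
        data = list(range(200_000))           # placeholder for data input
    with timed_stage("preprocess", timings):
        scaled = [x / 200_000 for x in data]  # placeholder for feature scaling
    with timed_stage("predict", timings):
        total = sum(x * 0.5 for x in scaled)  # placeholder for model inference
    print(timings, "slowest:", slowest_stage(timings))
```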

The world of commercial machine learning is rapidly evolving, with advances in CPU architecture and design as well as the emergence of new technologies and trends. Looking ahead, it's essential to understand the developments that will shape the industry.

Advancements in CPU Architecture and Design

The CPU is the brain of any computing system, and recent advances in architecture and design have significantly affected commercial machine learning. One of the most significant developments is the rise of the ARM (Advanced RISC Machines) and RISC-V (maintained by RISC-V International) architectures. These designs offer improved performance, energy efficiency, and scalability, making them attractive for machine learning workloads.

ARM, in particular, has become a popular choice for machine learning due to its ability to provide high performance, low power consumption, and a wide range of device options. RISC-V, on the other hand, is an open-standard instruction set architecture that has gained significant traction in recent years, especially in edge computing and Internet of Things (IoT) devices.

Key Features of ARM and RISC-V Architectures

* Improved performance and energy efficiency

* Scalability and flexibility

* Wide range of device options

* Open nature of the RISC-V architecture

Role of Domain-Specific Hardware Accelerators

Domain-specific hardware accelerators (DSHAs) play a crucial role in commercial machine learning by providing dedicated hardware for specific workloads. DSHAs are designed to accelerate specific tasks or algorithms, such as matrix multiplication, convolutional neural networks (CNNs), or recurrent neural networks (RNNs).

These accelerators can significantly improve the performance and efficiency of machine learning workloads, leading to faster model training, inference, and deployment. Some examples of DSHAs include:

* Graphics Processing Units (GPUs) from NVIDIA and AMD

* Field-Programmable Gate Arrays (FPGAs) from Xilinx and Intel

* Application-Specific Integrated Circuits (ASICs) from various vendors

Benefits of Domain-Specific Hardware Accelerators

* Improved performance and efficiency

* Reduced power consumption

* Increased scalability and flexibility

* Dedicated hardware for specific workloads

Emerging Trends in Machine Learning Software

The world of machine learning software is constantly evolving, with new trends and technologies emerging every year. Some of the most promising trends include:

* Transfer Learning: A technique that allows machine learning models to leverage knowledge from one domain and adapt it to another. It can significantly improve model performance and training efficiency.

* Model Distillation: A technique that involves training a smaller, more efficient model to mimic the behavior of a larger, more complex model. It can significantly reduce model size and improve deployment efficiency.

* Quantization: A technique that reduces the precision of model weights and activations to improve efficiency and reduce memory usage. It can significantly improve inference speed and deployment efficiency, often with only a small loss in accuracy.
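
Quantization can be illustrated with a few lines of plain Python. The sketch below uses symmetric per-tensor quantization, one common scheme among several: weights are mapped to integers in the range -127 to 127 with a single scale factor, then mapped back to show the rounding error. The function names and sample weights are made up for the example, and production frameworks apply more elaborate variants.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the quantized integers."""
    return [q * scale for q in quantized]


if __name__ == "__main__":
    weights = [2.54, -1.0, 0.37, 0.0]  # illustrative values
    quantized, scale = quantize_int8(weights)
    restored = dequantize(quantized, scale)
    errors = [abs(a - b) for a, b in zip(weights, restored)]
    print(quantized, scale, max(errors))
```

Each weight now needs one byte instead of four (for 32-bit floats), and the reconstruction error per weight is bounded by about half the scale factor.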

Examples and Real-World Applications

* Transfer learning has been applied in areas such as image classification, natural language processing, and recommendation systems.

* Model distillation has been applied in areas such as speech recognition, image classification, and recommendation systems.

* Quantization has been applied in areas such as image classification, natural language processing, and recommendation systems.

Comparison of CPU Architectures and Emerging Machine Learning Trends

The following table compares CPU architectures and their relevance to emerging machine learning trends:

| CPU Architecture | Model Distillation | Transfer Learning | Quantization |
|---|---|---|---|
| ARM | High | High | High |
| RISC-V | Medium | Medium | Medium |
| x86 | Low | Low | Low |

Note: The comparison is based on the current state of the art and may change with future developments.

Final Review

A comprehensive understanding of the best CPU for commercial machine learning requires a nuanced evaluation of various factors, including CPU architecture, core count, and energy efficiency. By considering these factors and selecting the optimal CPU for your specific use case, you can unlock the full potential of your machine learning applications.

Key Questions Answered

What is the most important factor to consider when selecting a CPU for commercial machine learning?

The most important factor to consider is the CPU architecture and its ability to support parallel processing and matrix operations.

Can AMD CPUs deliver comparable performance to Intel CPUs for machine learning workloads?

Yes, AMD CPUs have made significant strides in recent years and can deliver comparable performance to Intel CPUs for certain machine learning workloads.

How do CPU manufacturers optimize their CPUs for machine learning workloads?

CPU manufacturers use a variety of techniques, including specialized cores, cache hierarchy optimization, and matrix operation acceleration.